Some time ago we wrote a post about alt texts and why they’re important in web development. Around the same time, our development team started thinking about how to make writing them simpler for content managers in our CMS, Sanity.io. We decided to use a machine learning service already available on the market to generate alt texts for us. In this blog post, I want to walk you through our journey in the hope that it might be useful to you too.

Image recognition choice

As a first step, I researched which image recognition service was best suited for the job. I was looking for something that would return whole sentences, not just a list of tags and words. I also checked how different services described the same set of sample pictures - whether the descriptions were correct, and whether a service attempted to describe an abstract background image or icon or simply recognized it as some sort of background pattern.

Our choice - Computer Vision from Microsoft Azure

I tried most of the available services and found that they all performed well, but Computer Vision from Microsoft Azure returned natural-sounding sentences together with tags in a single request. In around 75% of the cases I tested (some of them really tricky), it produced an impressive description rather than random, bot-like output. An added bonus is that it has a fairly generous free plan - 5000 requests per month are free - so it’s usable in hobby projects, and you can test it on your own without spending a single penny.
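To make "sentences with tags in one request" more concrete, here is a minimal sketch of how such a request and its response could be handled. It assumes the Computer Vision REST endpoint `/vision/v3.2/analyze` (the API version used in our project may have differed), and the helper names `buildAnalyzeRequest`, `pickCaption` and `pickTags` are made up for this illustration.

```javascript
// Sketch only: the API version (v3.2) and all helper names are assumptions.

// Builds the URL and fetch options for one Analyze Image call that asks
// for both a sentence description and tags.
function buildAnalyzeRequest(endpoint, key, imageUrl) {
  return {
    url: `${endpoint}/vision/v3.2/analyze?visualFeatures=Description,Tags`,
    options: {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        "Ocp-Apim-Subscription-Key": key,
      },
      // The image must be reachable by Azure through a public URL.
      body: JSON.stringify({ url: imageUrl }),
    },
  };
}

// Returns the caption with the highest confidence, or null if there is none.
function pickCaption(analysis) {
  const captions =
    (analysis.description && analysis.description.captions) || [];
  if (captions.length === 0) return null;
  return captions.reduce((best, c) =>
    c.confidence > best.confidence ? c : best
  ).text;
}

// Returns the names of tags at or above a confidence threshold.
function pickTags(analysis, minConfidence = 0.5) {
  return (analysis.tags || [])
    .filter((tag) => tag.confidence >= minConfidence)
    .map((tag) => tag.name);
}
```

With a real resource you would pass `url` and `options` to `fetch` and feed the parsed JSON into `pickCaption` and `pickTags`. The 0.5 threshold is an arbitrary starting point, not a recommendation from Azure.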

Implementing recognition API in Sanity.io

After finding the best solution for my project, I thought about how to implement it in Sanity.io. For those who don't know what Sanity is - it's a headless CMS, often used for static sites, with a very clear and user-friendly interface. I thought about creating some sort of plugin, but unfortunately I couldn't find any hook for an image upload event in the built-in field. I could have written my own custom block with the necessary fields from scratch, but I didn't want to reinvent the wheel and I thought there must be another way.

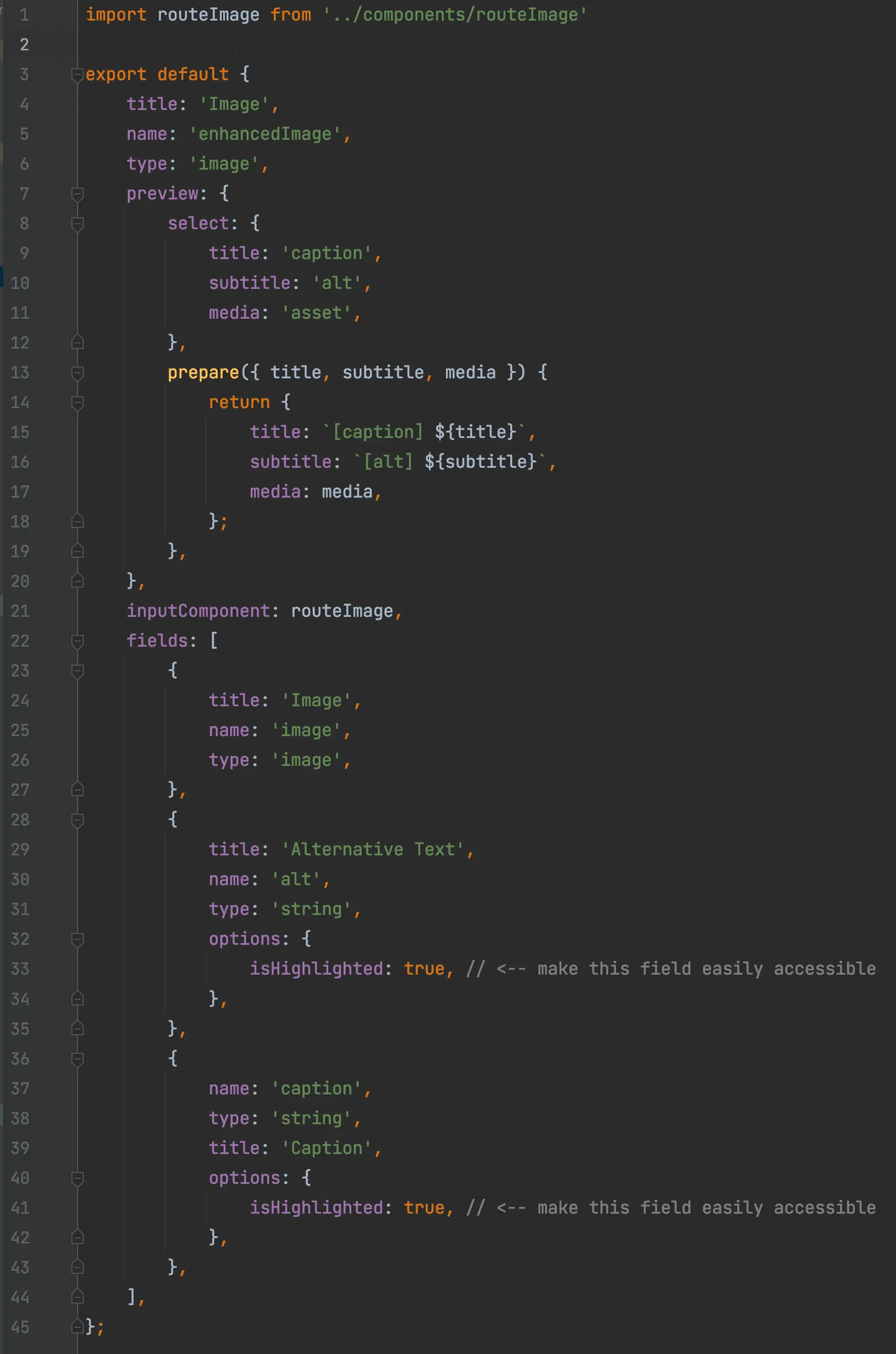

Fortunately, Sanity lets you create a custom input component, defined in its own js file, that can be connected to several fields at once. It also has access to the patch event that is triggered on image upload.
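As a rough illustration of what "listening to the patch event" can mean in practice, the sketch below scans a list of patches for a newly set image reference. The patch shape (`type`, `path`, `value._ref`) is my assumption about what the image field emits on upload, and `findUploadedAssetRef` is a hypothetical helper, not part of Sanity.

```javascript
// Sketch only: assumes an upload produces a "set" patch whose value is a
// reference to an image asset, e.g.
// { type: "set", path: ["asset"], value: { _type: "reference", _ref: "image-..." } }

// Returns the asset reference of a freshly uploaded image, or null if the
// patches describe some other change (for example removing the image).
function findUploadedAssetRef(patches) {
  for (const patch of patches) {
    const value = patch.value;
    if (
      patch.type === "set" &&
      value &&
      typeof value._ref === "string" &&
      value._ref.startsWith("image-")
    ) {
      return value._ref;
    }
  }
  return null;
}
```

Inside a custom input component, a helper like this would be called with the patches of each incoming event, and the API would only be queried when it returns a reference.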

Another obstacle is designing a schema that is easy to add to existing code and easy to remove if we decide not to use this solution anymore, without breaking existing data. One fairly complex solution is to create another image-typed field inside the existing image-typed schema, which passes the reference up (in this case to “enhancedImage”). Now, if we delete “inputComponent”, nothing will break and Sanity won’t show a type mismatch.

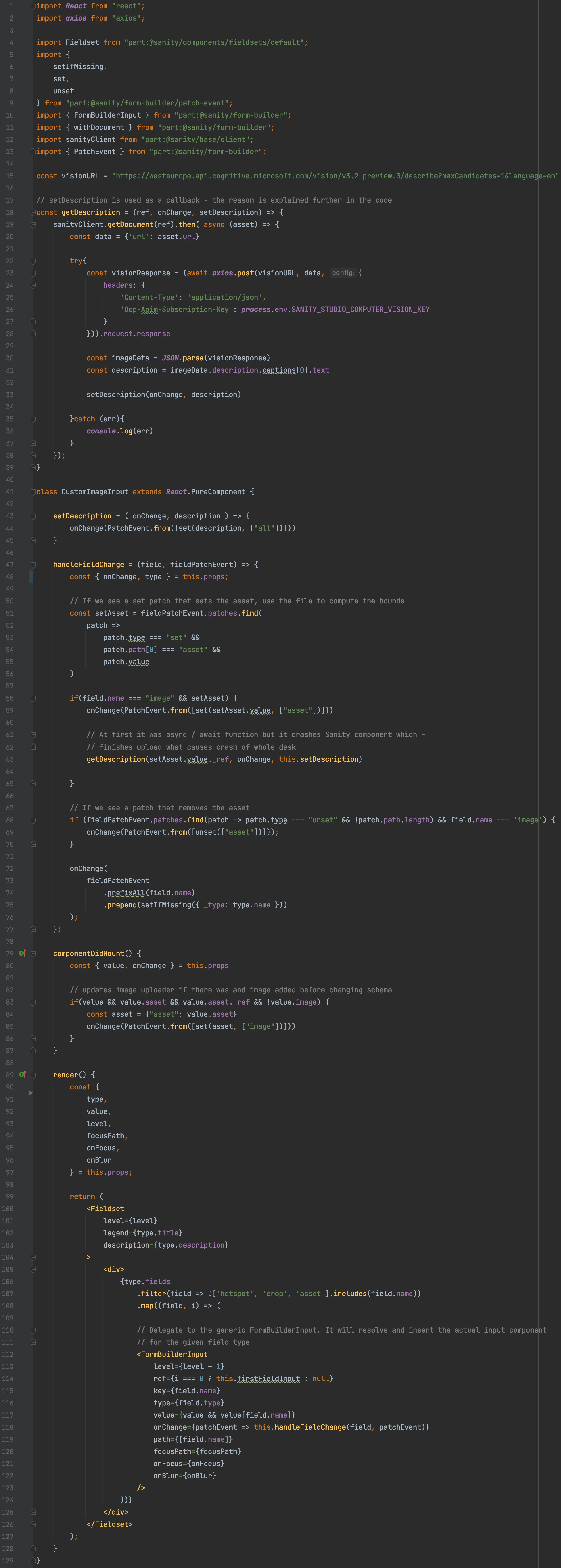
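In text form, a schema along these lines might look like the sketch below. Only the names “enhancedImage” and “inputComponent” come from our setup; the field names `alt` and `image`, the titles, and the placeholder `EnhancedImageInput` component are assumptions made for the example.

```javascript
// Sketch only: field names and the component are illustrative assumptions.

// Hypothetical stand-in for the custom React input component.
function EnhancedImageInput() {
  return null;
}

// An image type that contains an alt text field and a nested image field.
// The nested field carries the custom input component.
const enhancedImage = {
  name: "enhancedImage",
  title: "Image with alt text",
  type: "image",
  fields: [
    { name: "alt", title: "Alternative text", type: "string" },
    {
      name: "image",
      title: "Image",
      type: "image",
      inputComponent: EnhancedImageInput,
    },
  ],
};

// Shows why removal is safe: dropping inputComponent leaves every field
// name and type untouched, so stored documents still match the schema.
function withoutCustomInput(schema) {
  return {
    ...schema,
    fields: schema.fields.map(({ inputComponent, ...rest }) => rest),
  };
}
```

The point of the design is that the custom behaviour lives in a single property. Taking it out changes how the field is rendered, not what is stored.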

Now we need to create a React component (Sanity natively uses React.js) that listens to patch events, updates our alternative text field with the response from the recognition API, and passes references between the image fields. Unfortunately, Sanity Desk doesn't like async / await functions and tends to crash, so I didn't use them in “handleFieldChange”, but we can use them later in the “getDescription” function, which calls the API. I won’t describe each import and function - if you’re interested in reading about this in more detail, everything is fully described in the Sanity.io documentation.

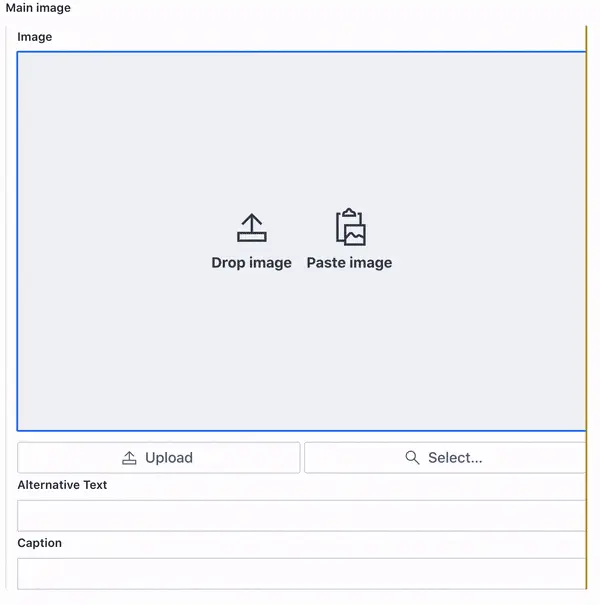
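Stripped of React and Sanity imports, the flow could be sketched roughly as follows: “handleFieldChange” stays a plain function that starts a promise chain, while “getDescription” is the only place that uses async / await. The function signatures, the `config` object, the `setAltText` callback, the injectable `fetchImpl` and the `v3.2` API version are all assumptions for this illustration; in the studio, `setAltText` would presumably emit a patch for the alt field.

```javascript
// Sketch only: signatures, config keys and the API version are assumptions.

// Converts a Sanity image asset reference ("image-<id>-<WxH>-<ext>")
// into its public CDN URL. Returns null for anything else.
function assetRefToUrl(ref, projectId, dataset) {
  const match = /^image-([a-zA-Z0-9]+)-(\d+x\d+)-([a-z0-9]+)$/.exec(ref);
  if (!match) return null;
  const [, id, dimensions, extension] = match;
  return `https://cdn.sanity.io/images/${projectId}/${dataset}/${id}-${dimensions}.${extension}`;
}

// async / await is confined to the function that calls the API.
async function getDescription(imageUrl, endpoint, key, fetchImpl = fetch) {
  const response = await fetchImpl(
    `${endpoint}/vision/v3.2/analyze?visualFeatures=Description`,
    {
      method: "POST",
      headers: {
        "Content-Type": "application/json",
        "Ocp-Apim-Subscription-Key": key,
      },
      body: JSON.stringify({ url: imageUrl }),
    }
  );
  if (!response.ok) {
    throw new Error(`Computer Vision request failed: ${response.status}`);
  }
  const analysis = await response.json();
  const captions =
    (analysis.description && analysis.description.captions) || [];
  return captions.length > 0 ? captions[0].text : "";
}

// Plain (non-async) handler: it only kicks off the promise chain.
// The promise is returned so callers and tests can wait for it.
function handleFieldChange(assetRef, config, setAltText) {
  const imageUrl = assetRefToUrl(assetRef, config.projectId, config.dataset);
  if (!imageUrl) return Promise.resolve(null);
  return getDescription(imageUrl, config.endpoint, config.key, config.fetchImpl)
    .then((text) => {
      setAltText(text);
      return text;
    })
    .catch((error) => {
      // Leave the alt field as it was if the API call fails.
      console.error("Could not generate alt text:", error);
      return null;
    });
}
```

Note that this only works if Azure can download the image, so the sketch assumes the dataset's assets are publicly readable through the CDN URL.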

And there you have it! The final result should look like this:

The result? A user-friendly Machine Learning Solution

Now, all that remains is for your web team and your content managers to start using it!